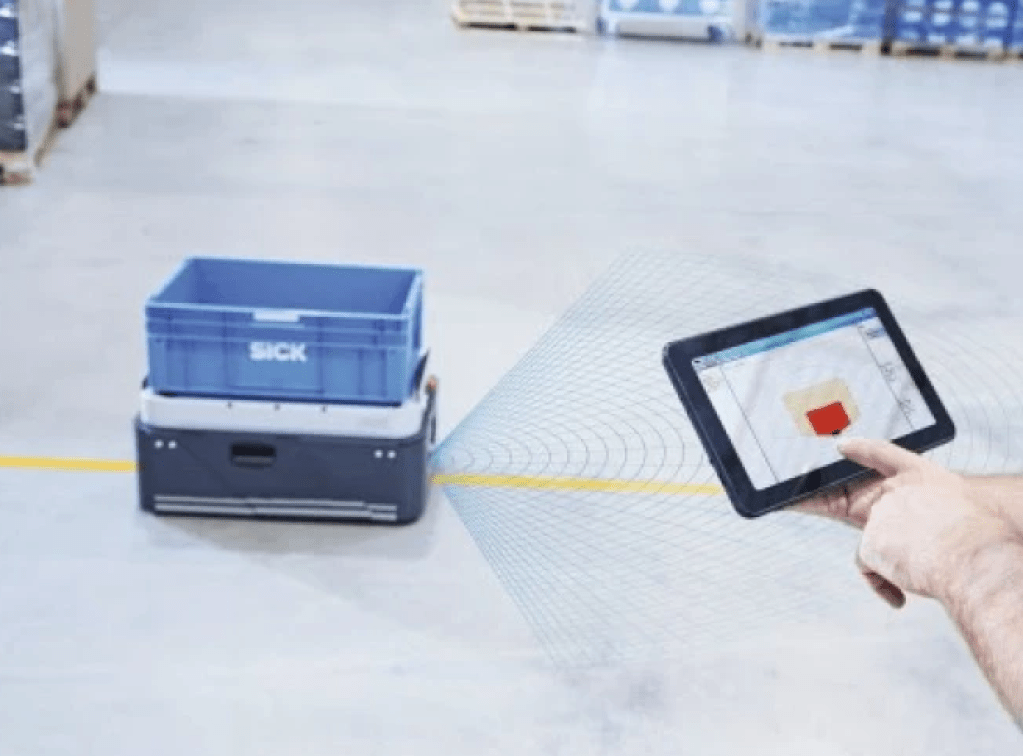

Sick has unveiled the compact scanGrid2 sensor that uses new self-developed solid-state LiDAR technology to increase productivity

Sick has unveiled the compact scanGrid2 sensor that uses new self-developed solid-state LiDAR technology to increase productivity, especially of small guided autonomous transport vehicles, known as Automated Guided Carts or AGC.

Certified as a type 2 / SIL 1 safety sensor according to IEC 61496-3, the scanGrid2 is used to protect hazardous areas with performance level (PL) as well as for collision avoidance. The programming app and the cloning function also offer convenient use and quick commissioning of the sensor.

“We want to offer, especially to manufacturers of small guided vehicles, a cost-effective safety solution with which they can increase the productivity of their applications. In particular, this means increasing the speed or loading of the vehicles, or being able to eliminate mechanical barriers, such as perimeter fences,” explains Marco Faller, director of product strategy at Sick AG.

Ultimation Industries introduces warehouse robots to give companies of all sizes more flexible material handling solutions

“AMRs have become common in large distribution companies such as Amazon and some Fortune 500 companies. But new technologies are making it easier and more affordable to deploy autonomous robots in companies of all sizes as a complement to other types of material handling equipment,” said Richard Canny, president of Ultimation Industries.

Ultimation is partnering with Denmark’s Nord-Modules to expand its portfolio of fast-to-market, productivity solutions. The strategic partnership will grow Nord-Modules’ market share in North America, leading to future opportunities for local assembly and manufacturing to suit local customer requirements.

Nord-Modules delivers reliable robotic top modules for warehouse robot load solutions. These units provide superior returns on investment through optimal speed, space and flexibility and can be fitted to various AMR solutions.

According to Canny, warehouse robots are a good option for manufacturers and distributors of all sizes who need to transfer loads within their facilities quickly and without reconfiguring production lines or factory footprints. They can be deployed wherever and whenever needed, interfacing with existing conveyor systems and providing flexibility as volumes change.

Nord-Modules’ Quick Mover 180, for example, uses an autonomous robot base with a flexible top module that performs multiple material handling tasks and handles a wide range of loads: gates, half pallets, plastic boxes, carton boxes, carts and cobot solutions. Without changing top modules, manufacturers can integrate the Quick Mover with MDRs to:

- Transport multiple types of containers from point A to B to C.

- Pick up goods from gates and deliver to a drop-off point.

- Precisely re-position goods to another automation area (e.g., a CNC machine).

Autonomous freight trucks an upgradeable technological reality

Plus (formerly Plus.ai), a leading company in self-driving truck technology, announced today that it will equip the next generation of its autonomous truck driving system with the NVIDIA DRIVE Orin™ system-on-a-chip (SoC). The company plans to roll out this next-generation system in 2022 across the U.S., China and Europe.

Plus is starting mass production of its autonomous driving system for heavy trucks this year, and will expand its feature set and operating design domain over time through over-the-air software updates. By working closely with the NVIDIA engineering team to further evolve its system, Plus will make it possible for trucks powered by its system to achieve fail-operational performance for greatest on-road safety.

“Enormous computing power is needed to process the trillions of operations that our autonomous driving system runs every fraction of a second. NVIDIA Orin is a natural choice for us and the close collaboration with the NVIDIA team on a custom design for our system helps us achieve our commercialization goals. We have received more than 10,000 pre-orders of our system, and will continue to develop our next-generation product based on the NVIDIA DRIVE platform as we deliver the systems to our customers,” said Hao Zheng, CTO and Co-founder, Plus.

The Plus autonomous driving system is designed to make long-haul trucks safer and more efficient. Because of the size and weight of heavy trucks, which can total 80,000 pounds with a fully-loaded trailer, they need more time to come to a stop and to maneuver. Plus’ system uses lidar, radar and cameras to provide a 360° view of the truck’s surroundings. Data gathered through the sensors help the system identify objects nearby, plan its course, predict the movement of those objects, and finally control the vehicle to make its next move safely.

The unrivaled compute power of NVIDIA Orin, which can deliver 254 trillion operations per second, is ideal for handling the large number of concurrent operations and supporting sophisticated deep neural networks to process and make decisions using the data on heavy trucks outfitted with the Plus autonomous driving system. Orin is also designed for ISO 26262 Functional Safety ASIL-D at the system level – vital for safety-critical applications like self-driving.

“Plus and its automated trucks are delivering true social benefits today through improved safety and efficiency,” said Rishi Dhall, vice president of autonomous vehicles, NVIDIA. “With NVIDIA DRIVE Orin, Plus’ next-generation automated system will raise the performance bar even higher.”

Start-ups are introducing next-generation virtual meeting software

Washington Post: (February 2021) Some tech companies have said people can continue to work from home indefinitely. Surveys suggest that most others are contemplating hybrid workspaces where staffers rotate between working remotely and coming into the office. The possible post-coronavirus situation has some companies envisioning a future in which people can collaborate in more interactive and engaging ways, whether they’re on-site or at home. One novel approach is to use 3-D holograms.

Last month, Canada-based ARHT Media launched HoloPod, a 3-D display system that beams presenters into meetings and conferences they otherwise might not be able to attend. That same month, the 3-D graphics company Imverse was recognized at the global tech conference CES for software that enables hologram collaboration within virtual meeting rooms. Last year, Spatial enabled holographic-style virtual meetings on Oculus Quest.

Others are racing to develop similar Web conferencing capabilities under the notion that holograms are more engaging to work with than tiles of faces on a computer screen. On the fringe for years, workplace holograms would give employees the ability to read body language and other physical reactions in cyberspace. The digital illusions might also foster greater collaboration and communication among colleagues unable to interact in the real world.

“As you look to that hybrid model, companies are going to have to innovate around that interplay between the remote employee experience and in-office employee experience,” said Lisa Walker, the vice president of brand at Fuze, a teleconferencing service. “The technologies that can solve for that are going to pop.”

A January workplace survey by PwC found that most executives and employees expect a hybrid workplace to kick off in the second quarter of this year. A separate survey by the National Association for Business Economics found that only 11 percent of the employees are expected to return to their pre-pandemic working arrangements. Corporate travel is expected to remain slashed. Holograms might not be the next big thing, but start-ups in the 3-D space are positioning their offerings just in case.

The three-dimensional light projections have primarily been seen re-creating musicians onstage in recent years. Companies have wanted to bring them into homes, but the projection hardware is still too expensive for most people to afford. Companies, on the other hand, have larger budgets. And now software advancements are unlocking ways to use laptops, computers and smartphones to engage with and stream holograms emitted elsewhere.

Smart Industry and Supply Chain using VR / AR integrated with IoT

January 2021.- With augmented reality (AR) being incorporated into so much more technical work, it makes sense to close the loop using IoT and sensors for feedback, and artificial intelligence (AI) to make the system more immersive and user-friendly. AR can help walk the user through an activity, whether the technology is used to speed up bin-picking or to give directions for assembly.

Using tags on the equipment for locating speeds up the process. It also adds an element of error-proofing: the forward-facing camera confirms that an item is being placed in the right location. The IoT loop verifies the activity, and AI can learn from errors why the instructions or indicators were confusing, which improves the instruction set for all tasks.

Some systems, such as pick-by-vision (3), aim to show metrics that benefit the company on the two main factors: accuracy and speed. These systems of course depend on the inventory management system and the warehouse location system with which they are integrated. Pick-by-vision systems use augmented reality, so the individual’s actual field of view is the base on which all the other information is overlaid: mapping information, directions, photos of the item to be picked, and bar codes to be compared. The great part of these systems is that they can work in real time and be improved through AI.
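As a rough illustration of the two metrics named above, the sketch below compares the bar code a headset scanned against the one the inventory system expected, then reports accuracy and average pick time. The `PickEvent` record, its field names, and the sample codes are hypothetical, invented for this example; they do not come from any particular pick-by-vision product.

```python
from dataclasses import dataclass


@dataclass
class PickEvent:
    """One pick, as a hypothetical system might log it."""
    expected_barcode: str  # code the inventory system says should be picked
    scanned_barcode: str   # code read by the headset camera
    seconds: float         # time the operator needed for this pick


def is_correct(event: PickEvent) -> bool:
    """A pick counts as correct when the scanned code matches the expected one."""
    return event.scanned_barcode == event.expected_barcode


def summarize(events: list[PickEvent]) -> dict[str, float]:
    """Return accuracy (share of correct picks) and mean seconds per pick."""
    if not events:
        return {"accuracy": 0.0, "mean_seconds": 0.0}
    correct = sum(is_correct(e) for e in events)
    total_time = sum(e.seconds for e in events)
    return {
        "accuracy": correct / len(events),
        "mean_seconds": total_time / len(events),
    }


# Made-up sample data: four picks, one of them wrong.
events = [
    PickEvent("A-100", "A-100", 12.0),
    PickEvent("B-200", "B-200", 8.0),
    PickEvent("C-300", "C-301", 10.0),  # wrong item picked
    PickEvent("D-400", "D-400", 10.0),
]
summary = summarize(events)
print(summary)
```

In a real deployment the expected codes would come from the inventory management system, and the mismatches would be the cases worth feeding back for review.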

Much of the success or failure of these systems comes down to the quality of the vision system and the cameras. Of course, the higher the quality, the more data there is, and the slower the response time can be unless the complete system is upgraded to make use of the camera data. This is also why many systems opt for cheaper GPS technology and scanning tags. These allow for lower resolution, but the trade-off is losing the finer analysis that higher-quality vision systems offer. The displays don’t have to be glasses-style; they can be a smartphone or tablet, but then you lose the hands-free advantage. The image is scanned and compared against a database, and orientation is also determined in order to factor in directions and mapping. The closer you get to your location, the more detail you receive.

With a slower system, or an interface that carries a lot of detail, issues can occur: for example, the person using the device may walk past the location because the feedback took too long. This is a problem you need to address early on to make sure your system is robust enough to handle the interface.

The vision systems give you the advantage of recognizing and comparing location based on visual cues. The simpler versions use tags similar to QR tags. These scale the distance based on the known location and size of the tag. This can be unreliable, though, as angular distortion and changes of position can take away some of the accuracy.
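One common way to scale distance from a tag of known size is the pinhole camera relation: distance = focal length (in pixels) × real tag size ÷ apparent tag size (in pixels). The sketch below assumes that model, which the article itself does not specify, and uses made-up camera numbers. It also approximates a tag viewed at an angle as shrinking by the cosine of that angle, which ignores perspective effects but shows why an angled view inflates the estimate.

```python
import math


def distance_from_tag(focal_length_px: float, tag_size_m: float,
                      apparent_size_px: float) -> float:
    """Estimate camera-to-tag distance with the pinhole model."""
    return focal_length_px * tag_size_m / apparent_size_px


def apparent_width_px(focal_length_px: float, tag_size_m: float,
                      distance_m: float, yaw_deg: float = 0.0) -> float:
    """Approximate on-image width of a tag rotated by yaw_deg.

    Simplification: the width is foreshortened by cos(yaw).
    """
    foreshortened = tag_size_m * math.cos(math.radians(yaw_deg))
    return focal_length_px * foreshortened / distance_m


# Assumed values: 800 px focal length, 10 cm tag, 2 m true distance.
FOCAL_PX, TAG_M, TRUE_DISTANCE_M = 800.0, 0.10, 2.0

head_on = distance_from_tag(
    FOCAL_PX, TAG_M, apparent_width_px(FOCAL_PX, TAG_M, TRUE_DISTANCE_M))
angled = distance_from_tag(
    FOCAL_PX, TAG_M, apparent_width_px(FOCAL_PX, TAG_M, TRUE_DISTANCE_M, 60.0))

print(f"head-on estimate: {head_on:.2f} m")
print(f"estimate at 60 degrees: {angled:.2f} m")
```

Under these assumptions the head-on estimate recovers the true distance, while the tag seen at 60 degrees appears half as wide and so looks about twice as far away. A system that also measures the tag's orientation can correct for this.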

Determining the picking order or assembly order is where these tools really excel, and what makes them more than a glorified laptop, tablet, or smartphone. High-tech programs take information from IoT and use the positioning data to determine an optimized flow, which capitalizes on the tool’s strengths; they can then use AI to learn the best flow sequence.
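To make the idea of ordering picks from positioning data concrete, here is a minimal sketch using a nearest-neighbor heuristic: always walk to the closest remaining bin. This is a simple stand-in chosen for illustration, not the method any particular product uses, and it does not guarantee the shortest route. The bin names and coordinates are invented, and straight-line distance ignores aisles and shelving.

```python
import math

Point = tuple[float, float]  # (x, y) position in meters


def nearest_neighbor_order(start: Point, picks: dict[str, Point]) -> list[str]:
    """Order picks by repeatedly visiting the closest remaining location."""
    remaining = dict(picks)  # copy so the caller's dict is not modified
    position = start
    order: list[str] = []
    while remaining:
        name = min(remaining, key=lambda n: math.dist(position, remaining[n]))
        order.append(name)
        position = remaining.pop(name)
    return order


def route_length(start: Point, picks: dict[str, Point], order: list[str]) -> float:
    """Total straight-line walking distance for a given pick order."""
    total = 0.0
    position = start
    for name in order:
        total += math.dist(position, picks[name])
        position = picks[name]
    return total


# Hypothetical pick list in the order the orders arrived.
picks = {"bin_c": (9.0, 0.0), "bin_a": (1.0, 0.0), "bin_b": (4.0, 0.0)}
start = (0.0, 0.0)

order = nearest_neighbor_order(start, picks)
print(order, route_length(start, picks, order))
print(list(picks), route_length(start, picks, list(picks)))
```

Comparing the two printed route lengths shows how much walking the reordering saves over taking the picks as listed. A learning system, as the article suggests, could go further by adjusting the sequence from observed pick times.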

The key for many of these systems is the feedback loop from the IoT, sensors, or tags. This data must be communicated elsewhere for comparison, and the results fed back. The glasses don’t have enough on board to act alone. In warehouse systems the key questions are mainly: did you pick the right part, and how long did it take? In assembly systems, these AR and VR tools can render an obstruction that isn’t there yet but is expected to be installed. This way you can run analysis checks and ergonomic assessments before installing the equipment that will cause the obstruction.

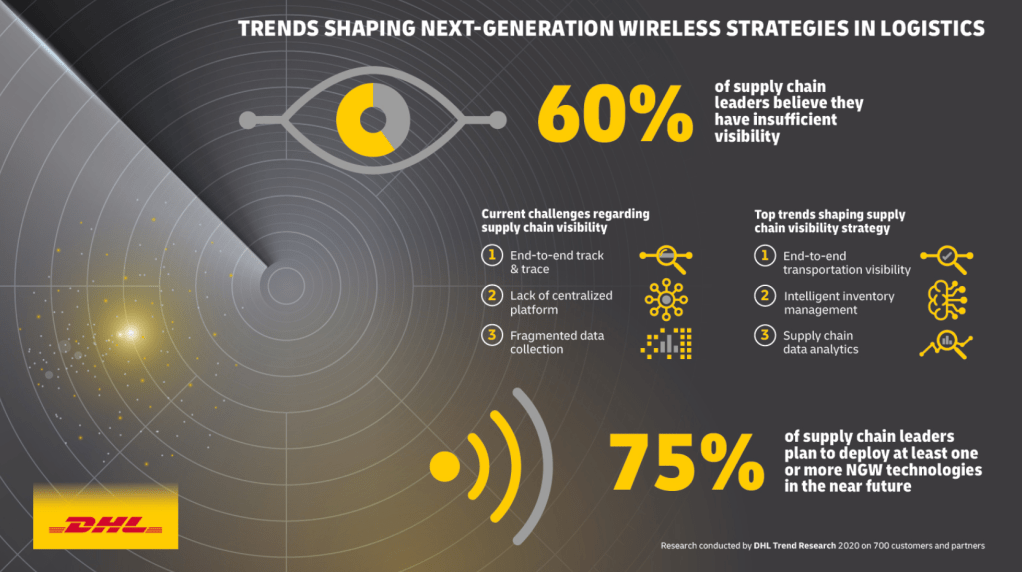

DHL’s Trend Report outlines the potential of Next-Gen Wireless in Logistics

June 2020.- The evolution of wireless networks and the Internet of Things (IoT) has revolutionized the world, and its emergence in the logistics industry has brought about a paradigm shift.

Wireless communication technology was making headlines way before the current COVID-19 crisis. Much of the recent interest has focused on 5G mobile data networks that are being rolled out in many countries. 5G promises a host of benefits for end users, businesses, and telecommunications systems operators alike, including higher speeds, greater capacity, and tailored services for a new generation of smart connected devices. Beyond 5G, progress across a wide range of different wireless communication technologies is now creating new opportunities for logistics to improve visibility, enhance operational efficiency, and accelerate automation. Well-known technologies like WiFi and Bluetooth and lesser-known technologies like Low Power Wide Area Networks (LPWAN) and Low Earth Orbit (LEO) Satellites have been enhanced for industrial use. These next-generation wireless technologies will enable the next step in the communication revolution, moving towards a new world in which everyone and everything can be connected everywhere. In a future where everyone and everything is online everywhere, three key things will become possible for the logistics industry:

- Total Visibility: Every shipment, logistics asset, infrastructure, and facility will be connected thanks to widely available networks and inexpensive high-performance sensors. This will enable highly efficient automation, process improvement, swifter and more transparent incident resolution, and – ultimately – the best service quality for both B2B and B2C customers.

- Wide-Scale Autonomy: All autonomous vehicles, whether indoor robots or logistics vehicles on public roads, rely on ultra-fast, reliable wireless communication to navigate and traverse their worlds effectively. While these solutions are on the rise today, next-generation wireless will be one key enabler driving their widespread use and moving the world to autonomous supply chains.

- Perfecting Prediction: With so many things online, the volume, velocity, and variety of data that we collect will triple the big data already being generated today. The continued progress of machine learning systems and artificial intelligence paired with the ultra-low latency of next-generation wireless means that data-driven prediction systems for forecasting, delivery timing and routing may no longer be constrained by latency and performance of wireless networks.

Source: Logistic Insider

BMW now working with NVIDIA to advance AI in logistics

May 2020.- BMW is prioritising intelligent logistics robots in its stated objective of using higher performance computer technology across vehicle making operations. Building on the advances it has made over the last four years with digital technology in logistics, the German company is now working with US software company NVIDIA.

Last week the two companies announced a pilot project in which BMW has equipped smart transport robots (STRs) with artificial intelligence (AI), developed on NVIDIA’s Isaac robotics software platform, which optimises material flow and planning processes.

According to BMW, the robots can now identify obstacles such as forklift trucks, tugger trains and people more quickly and select alternative routes. They can also learn from the environment and apply different responses to people and objects.

The STRs are one of five AI-enabled robot solutions the carmaker is developing with NVIDIA using the Isaac software platform to improve logistics processes. These include both navigation robots to transport material autonomously and robots used to select and organise parts. As first outlined in 2018, the other four are handling robots: SplitBot, PlaceBot, PickBot and SortBot (see box).

Source: Automotive Logistics
